A forum for cross-domain fertilisation in long-range development of computer technology

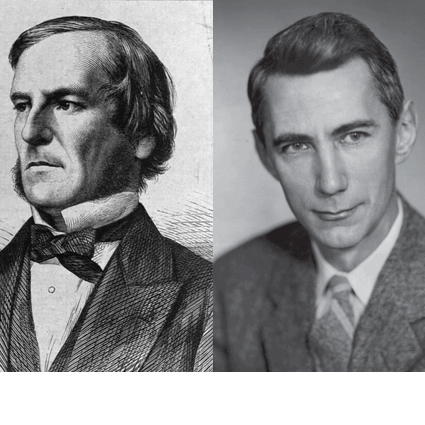

Register now for When Boole Meets Shannon: A forum for cross-domain fertilisation in long-range development of computer technology. The workshop is hosted by the Boole Centre for Research in Informatics, the School of Engineering, the Claude Shannon Institute, and the School of Mathematical Sciences at University College Cork.

In 2015, University College Cork celebrates the bicentenary of George Boole (1815-1864) with the Boole Conferences, held from 17 August to 5 September. The conference includes a series of workshops coordinated by i-RISC and Midas Ireland, the Microelectronics Industry Association. The event will serve as an interaction forum for researchers in information and coding theory, circuits and systems, computer engineering, and communication systems whose goal is to advance knowledge and understanding of reliable computing systems built from unreliable components. Presentations will explore the intertwined relationships between Boolean networks and Shannon information theory. Learn more on the Boole Conference website.

Jack Cowan is a Professor in the Mathematics Department at the University of Chicago. He also has joint appointments in the Department of Neurology and in the Committee on Computational Neuroscience. He was born in the UK and educated both in the UK and at MIT. He is perhaps best known for his work on the Wilson-Cowan equations, his work on Geometric Visual Hallucinations and what they tell us about the human brain, and his recent papers on stochastic Wilson-Cowan equations and their applications.

Abstract: From Boole To Shannon & Beyond

In 1938, C. E. Shannon’s paper “A Symbolic Analysis of Relay and Switching Circuits” was published in Trans. AIEE. It was one of the first applications of Boolean algebra to problems involving switching circuits. [A similar paper, based on earlier work, was published in the Soviet Union by V. I. Shestakov in 1941.] In 1943, W. S. McCulloch and W. H. Pitts published “A Logical Calculus of the Ideas Immanent in Nervous Activity” in Bull. Math. Biophys., in which they implemented the first-order predicate calculus of mathematical logic in switching circuits based on primitive models of nerve cells. McCulloch and Pitts showed that a network of such cells, if provided with an indefinitely large memory, could function as a universal Turing machine. Following this, in 1952 J. von Neumann showed how to construct networks of McCulloch-Pitts elements that were fault and failure tolerant. Von Neumann’s paper on this topic was included in “Automata Studies” (1956), a collection of papers edited by Shannon and J. McCarthy. This was followed in 1963 by the MIT Press monograph “Reliable Computation in the Presence of Noise” by S. Winograd and J. D. Cowan, which showed how Shannon’s Information Theory could be used to improve the efficacy of von Neumann’s networks. In my talk at this workshop I will summarize much of this history and attempt to relate it to more modern work on this and related topics, such as machine learning and the neuronal networks of the brain.

Lav R. Varshney is an assistant professor in the Department of Electrical and Computer Engineering, a research assistant professor in the Coordinated Science Laboratory, and a research affiliate in the Beckman Institute for Advanced Science and Technology, all at the University of Illinois at Urbana-Champaign. He received the B.S. degree with honors (magna cum laude) in electrical and computer engineering from Cornell University, Ithaca, New York, in 2004. He received the S.M., E.E., and Ph.D. degrees in electrical engineering and computer science from the Massachusetts Institute of Technology (MIT), Cambridge, in 2006, 2008, and 2010, respectively. He was a research staff member at the IBM Thomas J. Watson Research Center, Yorktown Heights, NY, from 2010 until 2013. His research interests include information and coding theory, signal processing and data analytics, collective intelligence in sociotechnical systems, neuroscience, and creativity.

Abstract: Toward Fundamental Limits of Reliable Memories Built from Unreliable Components

There has been long-standing interest in constructing reliable memory systems from unreliable components, such as noisy bit-cells and noisy logic gates, under circuit complexity constraints. Prior work has focused exclusively on constructive achievability results; here we develop converse theorems for this problem for the first time. The basic technique relies on entropy production/dissipation arguments and balances the need to dissipate entropy against the redundancy of the code employed. A bound derived from the entropy dissipation capability of noisy logic gates is applied via a sphere-packing argument. Although a large gap remains between the refined achievability results stated herein and the converse, some suggestions for ways to move beyond this first step are provided. This work was supported in part by Systems on Nanoscale Information fabriCs (SONIC), one of the six SRC STARnet Centers, sponsored by MARCO and DARPA.
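
For readers unfamiliar with sphere-packing arguments, the Python sketch below computes the classical Hamming (sphere-packing) bound for binary block codes: because Hamming balls of radius t around the codewords of a t-error-correcting code must be disjoint, a code of length n has at most 2^n / V(n, t) codewords, where V(n, t) is the volume of one ball. This is only the textbook bound, offered as an assumption-labelled illustration; it is not the entropy-dissipation converse developed in the talk, and the function names are our own.

```python
from math import comb, log2


def hamming_ball_volume(n: int, t: int) -> int:
    """Number of binary words within Hamming distance t of a fixed word of length n."""
    return sum(comb(n, i) for i in range(t + 1))


def max_codewords(n: int, t: int) -> int:
    """Sphere-packing bound: disjoint radius-t balls must fit inside {0,1}^n."""
    return 2 ** n // hamming_ball_volume(n, t)


def min_redundancy_bits(n: int, t: int) -> float:
    """Lower bound on check bits n - k: from 2^k * V(n, t) <= 2^n, n - k >= log2 V(n, t)."""
    return log2(hamming_ball_volume(n, t))


if __name__ == "__main__":
    # The (7,4) Hamming code corrects t = 1 error and meets the bound with equality.
    print(hamming_ball_volume(7, 1))  # 8
    print(max_codewords(7, 1))        # 16
    print(min_redundancy_bits(7, 1))  # 3.0
```

Codes that meet the bound with equality, such as the (7,4) Hamming code and the (23,12) Golay code, are called perfect; the gap between such counting bounds and what constructions achieve is the usual shape of an achievability/converse gap.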

Luca Benini is Professor of Digital Circuits and Systems at ETH Zurich. He is also a professor at the University of Bologna and currently serves as Chief Architect for the Platform2012/STHORM project at STMicroelectronics, Grenoble. He received a Ph.D. degree in electrical engineering from Stanford University in 1997. Dr. Benini's research interests are in the design of systems for ambient intelligence, from multi-processor systems-on-chip and networks-on-chip to energy-efficient smart sensors and sensor networks. From there his research interests have spread into the field of biochips for the recognition of biological molecules, into bioinformatics for processing the resulting information, and further into more advanced algorithms for in silico biology. He has published more than 300 papers in peer-reviewed international journals and conferences, three books, several book chapters and two patents.

Abstract: Ultra-Low Power Design for the IoT: An "Adequate Computing" Perspective

The "internet of everything" envisions trillions of connected objects loaded with high-bandwidth sensors requiring massive amounts of local signal processing, fusion, pattern extraction and classification. Higher-level intelligence, requiring local storage and complex search and matching algorithms, will come next, ultimately leading to situational awareness and truly "intelligent things" that harvest energy from their environment.

From the computational viewpoint, the challenge is formidable and can be addressed only by pushing computing fabrics toward massive parallelism and brain-like levels of energy efficiency. We believe that CMOS technology can still take us a long way toward this vision. Our recent results with the PULP (Parallel Ultra-Low Power) open computing platform demonstrate that pJ/op (GOPS/mW) computational efficiency is within reach in today's 28 nm FD-SOI CMOS technology. In the longer term, looking toward the next 1000x improvement in energy efficiency, we will need to fully exploit the flexibility of heterogeneous 3D integration, stop being religious about analog vs. digital and von Neumann vs. "new" computing paradigms, and seriously look into relaxing traditional "hardware-software contracts" such as numerical precision and error-free permanent storage.
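
The abstract quotes the same target in two units: energy per operation (pJ/op) and throughput per unit power (GOPS/mW). They are reciprocals with a unit factor of exactly one, since 1 GOPS/mW = 10^9 op/s divided by 10^-3 J/s = 10^12 op/J, which is 1 pJ per operation. The Python sketch below makes the conversion explicit; the helper names and the example operating points are hypothetical and are not PULP measurements.

```python
# Unit prefixes used in the conversions.
GIGA = 1e9
MILLI = 1e-3
PICO = 1e-12


def energy_per_op_pj(ops_per_second: float, power_watts: float) -> float:
    """Energy per operation in pJ: power (J/s) divided by throughput (op/s) gives J/op."""
    return power_watts / ops_per_second / PICO


def efficiency_gops_per_mw(ops_per_second: float, power_watts: float) -> float:
    """Throughput per unit power in GOPS/mW."""
    return (ops_per_second / GIGA) / (power_watts / MILLI)


if __name__ == "__main__":
    # Hypothetical operating point: 1e9 op/s at 1 mW.
    ops, watts = 1e9, 1e-3
    print(f"{energy_per_op_pj(ops, watts):g} pJ/op")          # 1 pJ/op
    print(f"{efficiency_gops_per_mw(ops, watts):g} GOPS/mW")  # 1 GOPS/mW
    # A further 1000x gain at the same power means 1e12 op/s, i.e. 1 fJ/op.
    print(f"{energy_per_op_pj(1e12, watts):g} pJ/op")         # 0.001 pJ/op
```

In general, an efficiency of x GOPS/mW corresponds to 1/x pJ/op, so the "next 1000x" mentioned above takes a 1 pJ/op design to roughly 1 fJ/op.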

Amin Shokrollahi finished his PhD at the University of Bonn in 1991. From 1991 to 1995 he was an assistant at the same university, before moving to the International Computer Science Institute in Berkeley in 1995. In 1998 he joined Bell Laboratories as a Member of the Technical Staff. In 2000 he joined the startup company Digital Fountain as its Chief Scientist, a position he held until Digital Fountain’s acquisition by Qualcomm in 2009. In 2003 he joined EPFL, where he holds the chairs of Algorithmic Mathematics in the Mathematics department and Algorithmics in the Computer Science department. In 2011 and 2012 he co-founded the company Kandou Bus, which uses novel approaches from discrete mathematics, algorithm design, and electronics to design fast and energy-efficient chip-to-chip communication links. Amin’s research interests are varied and cover coding theory, discrete mathematics, algorithm design, theoretical computer science, signal processing, networking, computational algebra, algebraic complexity theory, and, most recently, electronics, areas in which he has more than 100 publications and more than 70 pending and granted patent applications.

Abstract: Chordal Codes for Chip-to-Chip Communication

Modern electronic devices consist of a multitude of IC components: the processor, the memory, the RF modem and the baseband chip (in wireless devices), and the graphics processor are only some examples of components scattered throughout a device. The growing volume of digital data that such devices need to access and process calls for ever faster communication between these ICs. Faster communication, however, often means higher susceptibility to various types of noise and, inevitably, higher power consumption to combat that noise. This increase in power consumption is, for the most part, far from linear, and cannot easily be compensated for by Moore's Law. In this talk I will give a short overview of problems encountered in chip-to-chip communication and advocate the use of novel coding techniques to solve them. I will also briefly talk about Kandou Bus and some of the approaches the company is taking to design, implement, and market such solutions.

Bernd Steinbach studied Information Technology at the University of Technology in Chemnitz (Germany) and graduated with an M.Sc. in 1973. He received a Ph.D. in 1981 and, for his second doctoral thesis, a Dr. sc. techn. (Doctor scientiae technicarum) in 1984, both from the Faculty of Electrical Engineering of the Chemnitz University of Technology. In 1991 he obtained the Habilitation (Dr.-Ing. habil.) from the same Faculty. Since 1992 he has been a Full Professor of Computer Science / Software Engineering and Programming at the Freiberg University of Mining and Technology, Department of Computer Science. He is the editor of the book "Recent Progress in the Boolean Domain" (Cambridge Scholars Publishing, 2014) and the author of several of its chapters. He has published more than 240 chapters in books, complete issues of journals, and papers in journals and proceedings. He is the initiator and general chair of the biennial series of International Workshops on Boolean Problems (IWSBP), which started in 1994 and has comprised 11 workshops to date.

Abstract: Simpler Decomposition Functions Detected by Means of the Boolean Differential Calculus

The main idea of a bi-decomposition is to split a given Boolean function f(x) into two decomposition functions g(x) and h(x). A recursive synthesis method based on bi-decomposition finds a complete circuit if the decomposition functions g(x) and h(x) are simpler than the given function f(x). There are both linear and non-linear bi-decompositions. In his PhD thesis, Böhlau suggested three types of strong bi-decomposition: the non-linear AND-bi-decomposition, the non-linear OR-bi-decomposition, and the linear EXOR-bi-decomposition. Such bi-decompositions exist for functions f(x) that satisfy certain conditions, which can be expressed by means of the Boolean Differential Calculus. The big advantage of all strong bi-decompositions is that both decomposition functions g(x) and h(x) depend on fewer variables than the given function f(x); in this way the needed simplification is achieved. The drawback of strong bi-decompositions is that for some Boolean functions f(x) no strong bi-decomposition exists. Le suggested weak bi-decompositions in his PhD thesis. The weak linear EXOR-bi-decomposition always exists; unfortunately, the decomposition functions are not simpler in this case. Therefore, this type of bi-decomposition must be excluded from a synthesis approach based on bi-decomposition. However, Le also found the non-linear weak AND-bi-decomposition and the non-linear weak OR-bi-decomposition, which simplify the decomposition functions: g(x) can be chosen from a larger lattice of functions, and h(x) depends on fewer variables. Le's main result is the proof that every Boolean function has either a strong EXOR-bi-decomposition, a weak AND-bi-decomposition, or a weak OR-bi-decomposition. Hence, there is a complete synthesis approach based on strong and weak bi-decomposition.
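
The existence conditions for strong bi-decompositions can be checked mechanically on truth tables. The Python sketch below assumes a completely specified function represented as a callable on bit tuples (our own choice of representation) and implements two operators of the Boolean Differential Calculus, the simple derivative and the k-fold maximum. The OR and AND tests follow from choosing g and h as the k-fold minima (for OR) or maxima (for AND) of f; the EXOR test is restricted to single variables a and b, where the condition reduces to a vanishing second derivative. It is a brute-force illustration, not Böhlau's or Le's synthesis algorithm, and it does not cover weak bi-decompositions or incompletely specified functions.

```python
from itertools import product
from typing import Callable, Sequence, Tuple

Bits = Tuple[int, ...]
BoolFn = Callable[[Bits], int]  # completely specified f: {0,1}^n -> {0,1}


def _assign(x: Bits, idx: Sequence[int], vals: Sequence[int]) -> Bits:
    """Return a copy of x with the variables in idx set to vals."""
    y = list(x)
    for i, v in zip(idx, vals):
        y[i] = v
    return tuple(y)


def k_max(f: BoolFn, idx: Sequence[int]) -> BoolFn:
    """k-fold maximum: OR of f over all value combinations of the variables in idx."""
    return lambda x: int(any(f(_assign(x, idx, v)) for v in product((0, 1), repeat=len(idx))))


def derivative(f: BoolFn, i: int) -> BoolFn:
    """Simple derivative: f(x) XOR f(x with x_i complemented)."""
    return lambda x: f(x) ^ f(_assign(x, [i], [1 - x[i]]))


def is_zero(f: BoolFn, n: int) -> bool:
    """True if f evaluates to 0 on every assignment of its n variables."""
    return all(f(x) == 0 for x in product((0, 1), repeat=n))


def has_strong_or(f: BoolFn, n: int, xa: Sequence[int], xb: Sequence[int]) -> bool:
    """f = g(xa, xc) OR h(xb, xc) exists iff f AND max_xa(NOT f) AND max_xb(NOT f) == 0.

    xa and xb must be disjoint; all remaining variables form xc.
    """
    not_f = lambda x: 1 - f(x)
    ma, mb = k_max(not_f, xa), k_max(not_f, xb)
    return is_zero(lambda x: f(x) & ma(x) & mb(x), n)


def has_strong_and(f: BoolFn, n: int, xa: Sequence[int], xb: Sequence[int]) -> bool:
    """f = g(xa, xc) AND h(xb, xc) exists iff NOT f AND max_xa(f) AND max_xb(f) == 0."""
    ma, mb = k_max(f, xa), k_max(f, xb)
    return is_zero(lambda x: (1 - f(x)) & ma(x) & mb(x), n)


def has_strong_exor(f: BoolFn, n: int, a: int, b: int) -> bool:
    """For single variables a != b: f = g(a, xc) XOR h(b, xc) exists iff d2f/(da db) == 0."""
    return is_zero(derivative(derivative(f, a), b), n)
```

For example, `has_strong_or(lambda x: x[0] | x[1], 2, [0], [1])` is true, while `has_strong_and` for the same function is false; a function for which all three checks fail for every choice of variable sets is one that requires a weak bi-decomposition.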

Building on his results about more general Boolean lattices, Steinbach found the new vectorial bi-decompositions as a supplement to the known bi-decompositions. The more general indicator for simplification is whether any vectorial derivative is equal to zero. The previously known bi-decompositions use only simple derivatives, which form a small subset of all vectorial derivatives. Practical examples confirm the benefit of using all bi-decompositions together.
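
The difference between simple and vectorial derivatives can be made concrete: for n variables there are n simple derivatives but 2^n - 1 vectorial derivatives, one per non-empty set of variables complemented simultaneously. The brute-force Python sketch below (helper names are our own) lists every variable set whose vectorial derivative of a function vanishes identically. It illustrates only the zero-derivative indicator named in the abstract, applied directly to a function f; it is not Steinbach's vectorial bi-decomposition procedure.

```python
from itertools import combinations, product
from typing import Callable, List, Sequence, Tuple

Bits = Tuple[int, ...]
BoolFn = Callable[[Bits], int]


def vectorial_derivative(f: BoolFn, idx: Sequence[int]) -> BoolFn:
    """f(x) XOR f(x with all variables in idx complemented simultaneously)."""
    def d(x: Bits) -> int:
        y = list(x)
        for i in idx:
            y[i] = 1 - y[i]
        return f(x) ^ f(tuple(y))
    return d


def vanishing_vectorial_derivatives(f: BoolFn, n: int) -> List[Tuple[int, ...]]:
    """All non-empty variable sets whose vectorial derivative of f is identically zero.

    Singleton sets correspond to simple derivatives (f does not depend on that variable).
    """
    zero_sets = []
    for k in range(1, n + 1):
        for idx in combinations(range(n), k):
            d = vectorial_derivative(f, idx)
            if all(d(x) == 0 for x in product((0, 1), repeat=n)):
                zero_sets.append(idx)
    return zero_sets


if __name__ == "__main__":
    # f = (x0 XOR x1) AND x2: no simple derivative vanishes,
    # but the vectorial derivative with respect to {x0, x1} does.
    f = lambda x: (x[0] ^ x[1]) & x[2]
    print(vanishing_vectorial_derivatives(f, 3))  # [(0, 1)]
```

In the example, no simple derivative detects any redundancy, yet the vanishing vectorial derivative shows that f depends on x0 and x1 only through x0 XOR x1, which is the kind of simplification that simple derivatives alone miss.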

The Workshop organizers acknowledge support from the European Union’s Seventh Framework Programme for Research and Technological Development, under Grant Agreement number 309129 (i-RISC project).

i-RISC Workshop Local Organizing Committee:

Dr. Emanuel Popovici, School of Engineering-Electrical and Electronic (E.Popovici@ucc.ie)

Dr. Liam Marnane, School of Engineering-Electrical and Electronic

Dr. Kevin McCarthy, School of Engineering-Electrical and Electronic

i-RISC Workshop Technical Committee:

Sorin Cotofana, TU Delft, Netherlands

Bane Vasic, University of Arizona, USA

Valentin Savin, CEA-Leti (co-chair), France (valentin.savin@cea.fr)

Alexandru Amaricai, University Politehnica Timisoara, Romania

Predrag Ivanis, University of Belgrade, Serbia

David Declercq, ENSEA Paris, France

Goran Djordjevic, University of Nis, Serbia

Emanuel Popovici, University College Cork, Ireland